The Meta-Repo Pattern

Software companies have spent a decade debating whether to merge their code into monorepos or keep it distributed across many repositories. Artificial intelligence is forcing the question open again, but with a different answer. AI coding agents, which now assist with a large and growing share of engineering work at many firms, struggle to operate across repository boundaries unless they are given explicit multi-repo context. They often cannot reliably trace a data flow across services, understand how infrastructure connects to application logic, or coordinate changes across codebases. The result is a new organizational problem: how to give a stateless machine the institutional knowledge that human engineers accumulate over months.

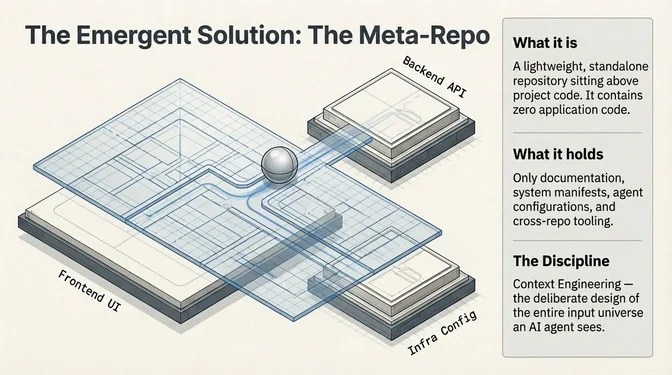

A pattern is emerging. Rather than restructuring codebases (an expensive and politically fraught undertaking), teams are building what amount to maps: lightweight repositories that sit above their project code and provide agents with the context they lack. Practitioners call them "meta-repos" or "virtual monorepos." They contain no application code, only documentation, manifests, and tooling that orient an AI agent across an entire system. This post surveys the pattern and the teams shaping it.

Three terms are worth distinguishing upfront. A true monorepo is a single repository containing the source code for many projects, sharing one commit history, one CI pipeline, and (often) one build system, as practiced at Google and Meta and supported by tools like Nx and Turborepo. A synthetic monorepo connects separate repositories into a unified dependency graph without moving any code; each repo keeps its own history and pipeline, but a layer above understands cross-repo relationships. A meta-repo (or virtual monorepo) is lighter still: a standalone repository containing no application code at all, only documentation, manifests, scripts, and agent configuration that orient tools and humans across a set of independent repos.

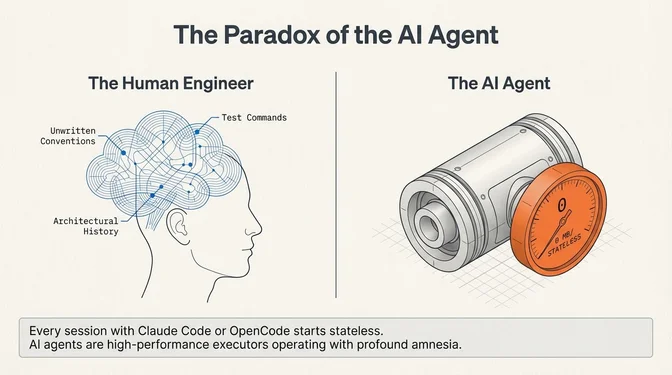

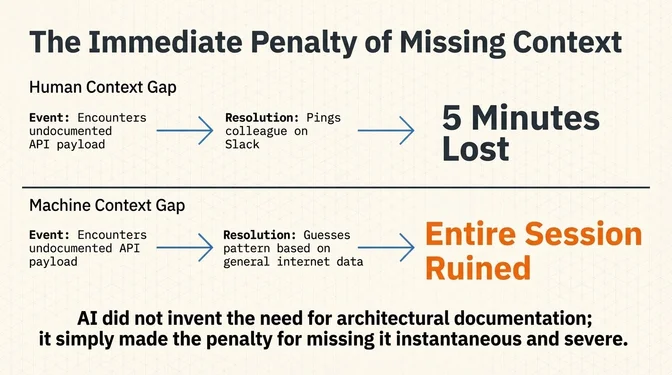

None of the disciplines described here (documentation, manifests, dependency graphs, onboarding scripts) are new. What AI agents change is the cost of not having them. A human engineer who encounters an undocumented convention loses minutes asking a colleague. An agent that encounters the same gap loses the entire session, or worse, invents a plausible but wrong answer. AI did not invent organizational hygiene; it made the penalty for missing it immediate and measurable.

Practitioners are calling this broader discipline context engineering: designing the entire input an AI agent sees, including the prompt, the files it reads, the tools it can call, and the rules it operates under. Owen Zanzal, a DevOps engineer formerly at GameChanger and now at Shoplogix, argues it has replaced prompt engineering as the core skill for working with agents, and the meta-repo pattern is where that discipline meets organizational structure.

The Context Problem

Every session with a coding agent begins with a fresh context window. Persistent instructions and auto-memory carry some knowledge across sessions, but without deliberate effort, the agent has no awareness of conventions, architecture, or infrastructure that isn't explicitly documented. As Matthew Groff, a Principal AI Engineer at Umbrage (a Bain & Company studio), puts it:

"Every session with Claude Code or OpenCode starts stateless. No memory of your conventions. No knowledge of your test commands. No awareness that you use bun instead of npm or that migrations go through Alembic and not raw SQL. The only thing that reliably onboards each new session is your rules file."

For a single repo, this is manageable. You write a CLAUDE.md (or AGENTS.md for Codex), document your conventions, and the agent picks them up.

For a multi-repo architecture, it breaks down. Each repo is an island. The agent working on the frontend has no idea what the backend API looks like. The agent modifying infrastructure config doesn't know which services depend on it. Cross-repo changes, the kind that matter most, require the developer to manually bridge context between sessions.

Zanzal captured this problem in his write-up on managing 35 repos with Claude Code: a single data flow might touch an ingestion service, an event bus, a stream processor, and a dashboard, living in four different repositories. An agent scoped to any one repo can only see a quarter of the picture.

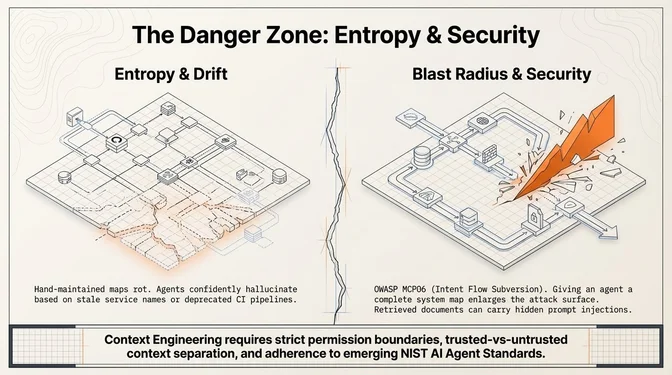

But solving the context problem introduces another risk: giving an agent a map of the whole system also enlarges the blast radius of mistakes and attacks. Retrieved documents, uploaded files, and metadata can carry hidden instructions; helper scripts and CI integrations can turn bad context into real actions. OWASP's MCP Top 10 now treats malicious instructions embedded in retrieved context as a first-order agent risk (see MCP06, Intent Flow Subversion). NIST has launched an AI Agent Standards Initiative and separately requested industry input on securing agent systems. The leading agent platforms already reflect this: Claude Code's configuration model documents deny-rules for sensitive files, configuration scopes and managed policies, hook restrictions, and MCP allowlists.

In practice, meta-repo design has to include permission boundaries, sensitive-file exclusions, trusted-versus-untrusted context separation, and review gates for high-risk operations.
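As a rough illustration of a sensitive-file exclusion, the sketch below filters candidate paths against a deny list before anything is handed to an agent. The patterns and function names are hypothetical, and this is not Claude Code's configuration format or matching logic; it only shows the shape of the check.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Hypothetical deny patterns; a real workspace would define its own.
DENY_PATTERNS = [".env", "*.pem", "secrets/*", "*/secrets/*"]


def is_denied(path, patterns=DENY_PATTERNS):
    """Return True if the full path or its file name matches a deny pattern."""
    posix = PurePosixPath(path).as_posix()
    name = PurePosixPath(path).name
    return any(fnmatchcase(posix, p) or fnmatchcase(name, p) for p in patterns)


def readable_files(paths, patterns=DENY_PATTERNS):
    """Keep only the paths an agent would be allowed to read."""
    return [p for p in paths if not is_denied(p, patterns)]
```

A filter like this belongs in whatever helper assembles context for the agent, so that excluded files never reach the prompt in the first place.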

Approach 1: The virtual monorepo

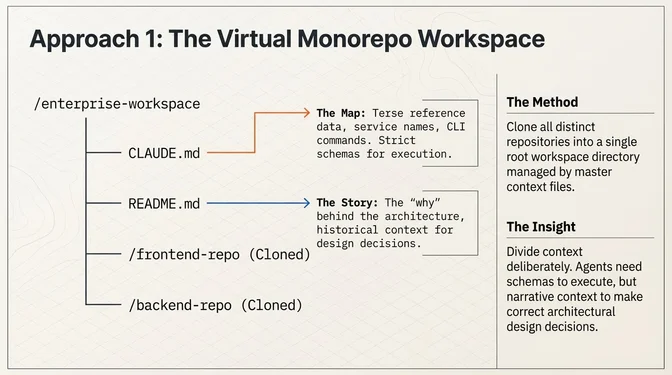

Zanzal's solution is what he calls the virtual monorepo pattern: clone all your repos into a single workspace directory, then add a CLAUDE.md and README.md at the root that give agents the full system map.

The key insight from his approach: the CLAUDE.md provides terse, reference-oriented context (service names, connections, commands), while the README.md provides the narrative, the why behind the architecture. Together, they give the AI both the map and the story.

He notes real costs worth naming: disk space from cloning everything, keeping the manifest in sync as the team adds or renames repos, and context noise in large systems. For that last point, he suggests maintaining separate scoped workspaces rather than one giant one.

His post outlines a possible next step: auto-generating the system map from service metadata, API contracts, and event schemas so the documentation stays current as the system changes.
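A minimal sketch of what generating the system map could look like, assuming service metadata is already available as plain dictionaries. The service names, repo names, topic names, and field names here are invented for illustration and do not come from Zanzal's post.

```python
# Hypothetical service metadata; in practice this might be derived from
# API contracts, event schemas, or a service catalog.
SERVICES = [
    {"name": "ingestion", "repo": "org/ingestion",
     "publishes": ["events.raw"], "consumes": []},
    {"name": "stream-processor", "repo": "org/stream-processor",
     "publishes": ["events.clean"], "consumes": ["events.raw"]},
    {"name": "dashboard", "repo": "org/dashboard",
     "publishes": [], "consumes": ["events.clean"]},
]


def render_system_map(services):
    """Render a terse, reference-style system map as Markdown text."""
    lines = ["# System map (generated, do not edit by hand)", ""]
    for svc in sorted(services, key=lambda s: s["name"]):
        lines.append(f"## {svc['name']}")
        lines.append(f"- repo: {svc['repo']}")
        lines.append(f"- publishes: {', '.join(svc['publishes']) or 'none'}")
        lines.append(f"- consumes: {', '.join(svc['consumes']) or 'none'}")
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_system_map(SERVICES))
```

Running a generator like this in CI and committing its output is one way to keep the terse reference file from drifting, while the narrative README stays hand-written.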

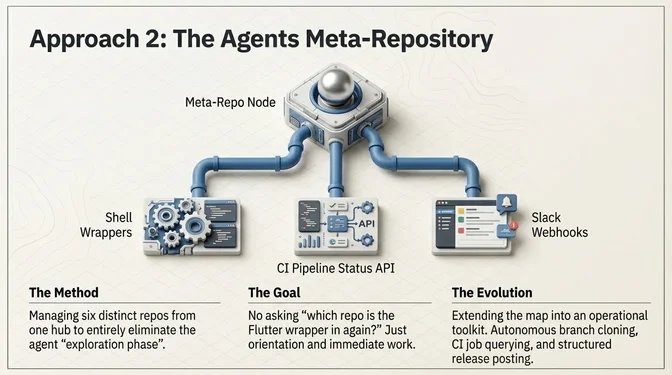

Approach 2: The agents meta-repository

Bernd Kampl, a developer at Anyline, a company that builds mobile SDKs for optical character recognition (scanning IDs, license plates, barcodes), published a detailed case study describing what they call an agents meta-repository. Their codebase spans six repositories across Android, iOS, Flutter, React Native, Cordova, and .NET, each with its own build system, CI pipeline, and release process. Their framing centers on eliminating the "exploration phase" at the start of every agent session:

"I fire up an agent in our top-level workspace, give it a ticket number and tell it which product we're working on. The agent reads the meta-repo, orients itself, and we're off to the races. No exploration phase. No 'which repo is the Flutter wrapper in again?' No re-reading of commit conventions. Just work."

What makes their approach distinctive is how far they push the meta-repo beyond documentation. Their meta-repo includes shell script wrappers around CLI tools that standardize common operations, CI pipeline integration so agents can query build status and inspect failed jobs, repository and merge request management so agents can clone, branch, push, and assign reviewers autonomously, and chat integration so agents can read and post to Slack channels with structured release announcements.

They explicitly address the monorepo temptation: monorepos solve some of these problems, but they also introduce build complexity, access control challenges, CI blast radius concerns, and the need to coordinate releases across teams operating at different cadences.

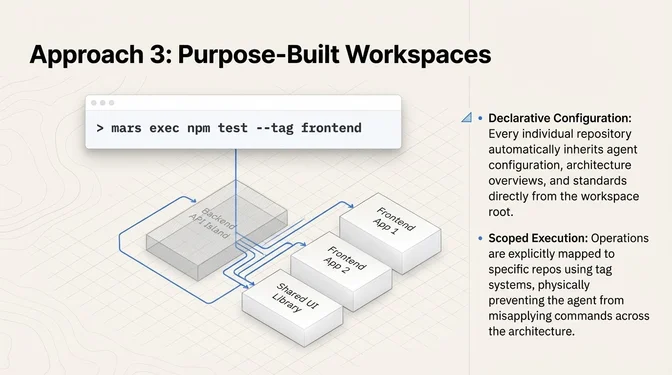

Approach 3: Mars, a dedicated workspace tool

Mars, created by Dean Sharon, is an open-source tool built specifically for this problem. It formalizes the meta-repo pattern into a CLI with a declarative config file:

    repos:
      - url: git@github.com:org/frontend.git
        tags: [frontend, web]
      - url: git@github.com:org/backend-api.git
        tags: [backend, api, payments]
      - url: git@github.com:org/shared-lib.git
        tags: [shared, backend, frontend]

Mars provides mars sync (pull latest across all repos), mars status (one table showing every repo's branch, dirty state, ahead/behind), and mars exec (run a command across repos). The tag system lets you scope operations: for example, the command mars exec "npm test" --tag frontend runs tests only in frontend repos.
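The tag scoping idea can be sketched in a few lines. This is not Mars's implementation; it is a hypothetical illustration that mirrors the config above as a Python list and shows how a tag selects a subset of repos. The helper names are invented.

```python
# The repos from the config above, represented as plain dictionaries.
REPOS = [
    {"url": "git@github.com:org/frontend.git", "tags": ["frontend", "web"]},
    {"url": "git@github.com:org/backend-api.git", "tags": ["backend", "api", "payments"]},
    {"url": "git@github.com:org/shared-lib.git", "tags": ["shared", "backend", "frontend"]},
]


def repo_name(url):
    """Derive a short repo name from a git URL (hypothetical helper)."""
    name = url.rsplit("/", 1)[-1]
    return name[:-4] if name.endswith(".git") else name


def select_by_tag(repos, tag):
    """Return the names of repos that carry the given tag, in config order."""
    return [repo_name(r["url"]) for r in repos if tag in r["tags"]]
```

With this data, selecting the frontend tag yields both the frontend repo and the shared library, which is the point of letting one repo carry several tags.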

The key design decision: every repo inherits agent config from the workspace root. You configure your agent once (architecture overview, coding standards, service relationships) and every repo gets that context automatically. Mars also treats the workspace itself as a git repo, so the configuration is version-controlled and cloneable.

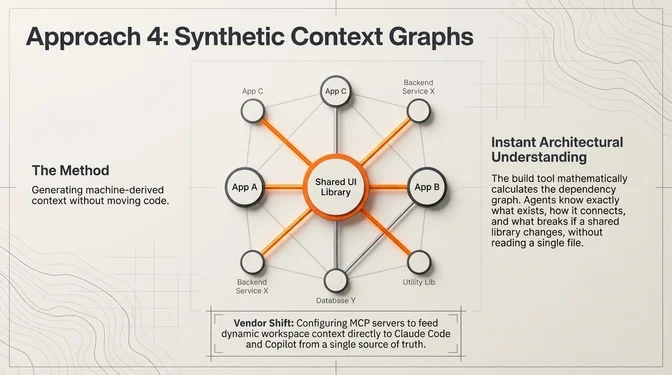

The adjacent answer: Nx synthetic monorepos

Nx, the monorepo build tool, is approaching the same problem from the opposite direction. Rather than creating a lightweight workspace above your repos, Nx connects separate repositories into a unified dependency graph, what they call a synthetic monorepo. No code moves; each repo keeps its own history and pipeline. But the graph gives agents instant architectural understanding without reading a single file: which projects exist, how they depend on each other, and what breaks if a shared library changes.
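The question of what breaks if a shared library changes is a reverse-dependency traversal. The sketch below is a generic breadth-first search over a hand-written graph; the project names are hypothetical, and it does not use or represent Nx's API or graph format.

```python
from collections import deque

# Hypothetical graph: each project maps to the projects it depends on.
DEPENDS_ON = {
    "frontend": ["shared-lib"],
    "backend-api": ["shared-lib"],
    "dashboard": ["backend-api"],
    "shared-lib": [],
}


def affected_by(changed, depends_on=DEPENDS_ON):
    """Return every project that directly or transitively depends on `changed`."""
    # Invert the edges so we can walk from a dependency to its dependents.
    dependents = {name: [] for name in depends_on}
    for project, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(project)

    seen, queue = set(), deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return sorted(seen)
```

In this toy graph, a change to shared-lib reaches the dashboard even though the dashboard never imports it directly, which is exactly the kind of relationship an agent scoped to one repo cannot see.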

Nx recently shipped agent skills that configure an MCP server for CI integration, workspace operation skills, and a CLAUDE.md / AGENTS.md with guidelines, working across Claude Code, Cursor, GitHub Copilot, Gemini, Codex, and OpenCode from a single source of truth.

This is not a meta-repo in the sense the other examples are. This is a build-tool vendor adding agent awareness to an existing monorepo product. But it addresses the same core problem (giving agents cross-project context), and its approach to generated, machine-derived dependency graphs is directly relevant to any meta-repo that wants to keep its system map current without maintaining it by hand.

Why not just use a monorepo?

The obvious question is why anyone would build a context layer on top of separate repos when a monorepo provides that context by default. The monorepo argument is getting stronger in the age of AI agents. As monorepo.tools argues: "Repo boundaries act as walls for both humans and AI assistants."

But most teams that adopt a meta-repo pattern are not choosing it over a monorepo on a blank slate. They already have a multi-repo architecture, and restructuring is expensive, politically fraught, and operationally risky. The meta-repo exists because it delivers most of the context benefits without requiring a migration: independent CI pipelines, release cadences, and access control boundaries all stay intact. The tradeoff is real: you get visibility via documentation rather than by default, and you lose atomic cross-repo commits entirely. But for teams where the alternative is "do nothing and let agents work blind," the meta-repo is the pragmatic move.

Where it breaks in practice

The pattern also fails in predictable ways. The most common: a root CLAUDE.md that made sense six months ago but now describes a service someone renamed, a database the team retired, or a CI pipeline that no longer exists. The agent follows stale instructions confidently; it has no way to know they're wrong. Helper scripts accumulate silently: someone adds a wrapper that hard-codes a staging URL, another contributor adds a shortcut that skips linting, and within a few months the meta-repo is encoding undocumented local habits rather than team policy. System maps maintained by hand drift from reality the moment someone deploys a new service without updating the manifest. These are not exotic failures. They are the normal entropy of any documentation layer, accelerated by the fact that agents treat documentation as ground truth rather than rough guidance.
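One inexpensive guard against this drift is a check that compares what the documentation claims with what is actually in the workspace. The sketch below assumes repos are cloned as direct children of the workspace directory and that the documented names are available as a set; both are assumptions, as is the function name.

```python
from pathlib import Path


def find_drift(workspace, documented):
    """Compare documented repo names against cloned repos found on disk.

    A directory counts as a repo if it contains a .git entry.
    """
    on_disk = {
        p.name
        for p in Path(workspace).iterdir()
        if p.is_dir() and (p / ".git").exists()
    }
    return {
        "documented_but_missing": sorted(set(documented) - on_disk),
        "present_but_undocumented": sorted(on_disk - set(documented)),
    }
```

Run on a schedule or in CI, a non-empty result in either list is a signal that the root manifest needs a human to look at it before an agent relies on it.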

The core risk: a meta-repo that is not actively maintained becomes a source of confidently wrong behavior at agent speed.

What this pattern does not solve

A meta-repo narrows the visibility gap, not the transaction gap. It helps an agent understand what should change across repositories, but it does not make those repositories share one history, one commit, one release train, or one integration environment. As Nx's synthetic monorepo docs make explicit, each repository stays where it sits; the benefit is a cross-repo graph, not literal code consolidation. Atomic cross-repo commits, release coordination, and integrated testing remain engineering and operational problems that need dedicated process and tooling. Google's 2025 DORA research reinforces this from an operational angle: AI can increase throughput while still harming stability when the surrounding system is weak.

Measuring whether it works

Teams adopting a meta-repo should measure the workflow, not just admire the tooling. The useful question is not whether an agent can work across the workspace once, but whether the setup improves time to first useful change, cross-repo task completion rate, review load, and production stability without increasing defects or rollback risk. Google's adoption framework for Gemini Code Assist separates adoption, trust, acceleration, and impact, a useful structure for evaluating any agent-friendly workspace. Trust and adoption metrics matter separately from throughput; a faster agent that engineers do not trust or review properly produces fragile gains.
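To make the measurement concrete, here is a small sketch that computes three of these numbers from per-session records. The record fields and the sample values are made up purely for illustration; they are not data from any team or study mentioned in this post.

```python
from statistics import median

# Made-up sample records, one per agent session (illustrative only).
SESSIONS = [
    {"cross_repo": True, "completed": True, "minutes_to_first_change": 12, "rolled_back": False},
    {"cross_repo": True, "completed": False, "minutes_to_first_change": 40, "rolled_back": False},
    {"cross_repo": False, "completed": True, "minutes_to_first_change": 5, "rolled_back": True},
    {"cross_repo": True, "completed": True, "minutes_to_first_change": 20, "rolled_back": False},
]


def summarize(sessions):
    """Compute completion, speed, and stability metrics from session records."""
    cross = [s for s in sessions if s["cross_repo"]]
    completed = [s for s in sessions if s["completed"]]
    return {
        # Share of cross-repo tasks that were finished.
        "cross_repo_completion_rate":
            sum(s["completed"] for s in cross) / len(cross) if cross else None,
        # Median time until the agent produced its first useful change.
        "median_minutes_to_first_change":
            median(s["minutes_to_first_change"] for s in sessions) if sessions else None,
        # Share of completed tasks that were later rolled back.
        "rollback_rate":
            sum(s["rolled_back"] for s in completed) / len(completed) if completed else None,
    }
```

Tracking the rollback rate alongside the speed metrics keeps the stability side of the tradeoff visible, so a faster workflow is not mistaken for a better one.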

Academic support: the codified context paper

The broader idea behind this pattern, that agents need structured, multi-tier context to operate at scale, is starting to get academic attention. Aristidis Vasilopoulos published a paper in February 2026 documenting what he calls a three-tier codified context infrastructure, developed during construction of a 108,000-line C# distributed system:

- Hot memory (constitution): a root document encoding conventions, retrieval hooks, and orchestration protocols. Loaded every session.

- Specialized agents (19 domain experts): task-specific configurations invoked per task via a trigger table.

- Cold memory (knowledge base): 34 on-demand specification documents queried through an MCP retrieval service.

The paper tracks quantitative metrics across 283 development sessions and includes four case studies showing how codified context prevents failures and maintains consistency across sessions. The paper's key finding: single-file manifests (CLAUDE.md, AGENTS.md, .cursorrules) do not scale beyond modest codebases. A 1,000-line prototype can be fully described in a single prompt, but a 100,000-line system cannot.
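The tiering can be illustrated with a toy trigger table: one document is always loaded, and task keywords pull in additional specialized configuration. The file names, keywords, and matching rule below are hypothetical and are not taken from the paper, which describes its own mechanism.

```python
# Hypothetical always-loaded root document ("hot memory").
HOT_MEMORY = "constitution.md"

# Hypothetical trigger table: keyword -> specialized agent configuration.
TRIGGERS = {
    "migration": "agents/database.md",
    "network": "agents/networking.md",
    "ui": "agents/frontend.md",
}


def context_for(task):
    """Return the documents to load for a task: hot memory plus triggered agents."""
    words = set(task.lower().split())
    selected = [path for keyword, path in TRIGGERS.items() if keyword in words]
    return [HOT_MEMORY, *selected]
```

The design point is that only the root document pays its context cost on every session; everything else is loaded when a task calls for it.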

The paper does not study meta-repos specifically, but its core finding, that single-file context does not scale and that tiered, role-specific context infrastructure is necessary, aligns closely with the three-tier architecture Groff describes and the workspace designs from Zanzal and Kampl. It provides quantitative evidence for a claim the practitioners make from experience.

The monorepo-versus-polyrepo debate assumed the audience was human. The meta-repo is what happens when the audience is a machine, one that is fast, capable, and amnesiac. The teams that win will not be the ones with the best agents. They will be the ones whose agents know where they are.