Operating a Meta-Repo

The Meta-Repo Pattern surveys the approaches teams are using to give AI coding agents cross-repository context. This article covers the practical side: how to structure the documentation agents consume, how to govern the workspace contract, how to avoid the most common failure modes, and what tooling is emerging around these workflows.

The 3-tier documentation architecture

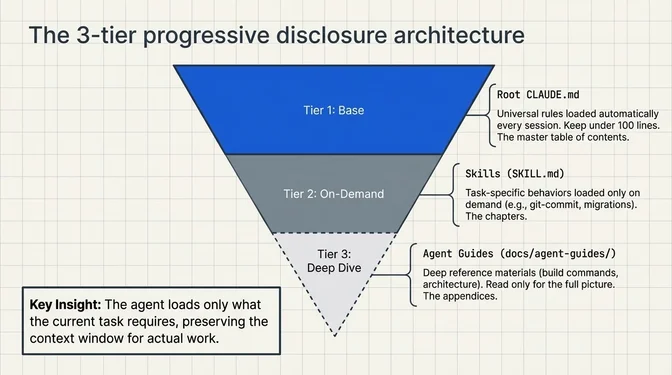

Regardless of which workspace approach teams use, a common structure is taking shape for the documentation that agents consume. Matthew Groff, a Principal AI Engineer at Umbrage (a Bain & Company studio), describes a 3-tier architecture that has circulated widely in practitioner writing:

Tier 1: Root CLAUDE.md: universal rules that apply to every task. Under 100 lines. Loaded automatically every session.

Tier 2: Skills (.claude/skills/name/SKILL.md): task-specific behavior loaded on demand. The agent reads a skill only when the task matches. One for commits, one for PRs, one for migrations.

Tier 3: Agent Guides (docs/agent-guides/name.md): deep reference material. Build commands, architecture docs, convention details. Skills point to these. The agent reads them only when it needs the full picture.

The key principle is progressive disclosure. The root file is a table of contents. Skills are chapters. Agent guides are appendices. The agent loads only what the current task requires.

Groff's starter set of five skills covers the most common workflows: build-test-verify, git-commit, create-pull-request, core-conventions, and self-review-checklist. Domain-specific skills get added as complexity demands.
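A minimal sketch of the progressive-disclosure idea, under stated assumptions: the skill names come from the starter set above, but the trigger keywords and guide paths are hypothetical, and keyword matching is only a stand-in for however a real agent decides that a skill applies.

```python
from dataclasses import dataclass

ROOT_FILE = "CLAUDE.md"  # Tier 1: always loaded


@dataclass(frozen=True)
class Skill:
    name: str                     # directory name under .claude/skills/
    triggers: tuple[str, ...]     # hypothetical keywords, a stand-in for the agent's own matching
    guides: tuple[str, ...] = ()  # Tier 3 guides this skill points to (hypothetical paths)


SKILLS = [
    Skill("build-test-verify", ("build", "test"), ("docs/agent-guides/build-commands.md",)),
    Skill("git-commit", ("commit",)),
    Skill("create-pull-request", ("pull request",), ("docs/agent-guides/review-process.md",)),
]


def files_for_task(task: str, need_full_picture: bool = False) -> list[str]:
    """Return the files a session would read for this task, in load order."""
    files = [ROOT_FILE]
    text = task.lower()
    for skill in SKILLS:
        if any(trigger in text for trigger in skill.triggers):
            files.append(f".claude/skills/{skill.name}/SKILL.md")  # Tier 2
            if need_full_picture:
                files.extend(skill.guides)  # Tier 3, only on request
    return files


print(files_for_task("write a commit message"))
print(files_for_task("run the tests", need_full_picture=True))
```

A task that matches no skill loads only the root file, which is the point: the cost of a session scales with the task, not with the size of the documentation tree.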

Governing against context drift

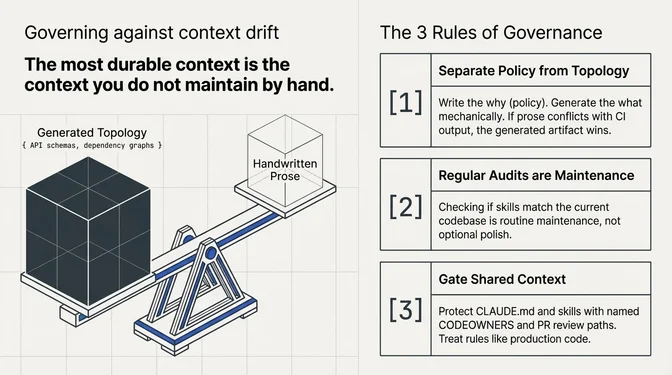

The first article describes how meta-repos fail in practice: stale manifests, silently accumulated scripts, root instructions that no longer match CI reality. The question for operators is what to do about it. Three principles show up repeatedly in the teams that keep their context layers healthy.

Separate policy from topology. Hand-written context should be short and policy-heavy, focused on why the team does things a certain way. Topology and dependency truth (what actually exists and how it connects) should come from source code, project graphs, or API schemas and be regenerated mechanically. When prose conflicts with a dependency graph or CI output, the generated artifact wins. The most durable context is the context you do not maintain by hand.
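As an illustration of regenerating topology mechanically, here is a rough sketch that derives an internal dependency map from parsed package manifests. The repo names, package names, and the `package.json`-like shape are assumptions for the example, not a description of any particular team's setup.

```python
def internal_edges(packages: dict[str, dict]) -> list[tuple[str, str]]:
    """Map each repo to the other workspace repos it depends on.

    `packages` maps a repo directory to its parsed manifest (assumed to have
    a "name" and an optional "dependencies" mapping, like package.json).
    """
    repo_by_package = {pkg["name"]: repo for repo, pkg in packages.items()}
    edges = []
    for repo, pkg in sorted(packages.items()):
        for dep in sorted(pkg.get("dependencies", {})):
            target = repo_by_package.get(dep)
            if target is not None and target != repo:  # skip third-party deps
                edges.append((repo, target))
    return edges


def render_map(edges: list[tuple[str, str]]) -> str:
    """Render the edges as a markdown file that is clearly marked as generated."""
    lines = ["<!-- GENERATED: do not edit by hand -->", "# Dependency map", ""]
    lines += [f"- {src} depends on {dst}" for src, dst in edges]
    return "\n".join(lines) + "\n"


# Hypothetical workspace with two repos.
packages = {
    "web": {"name": "@acme/web", "dependencies": {"@acme/api-client": "1.0.0", "react": "18.0.0"}},
    "api-client": {"name": "@acme/api-client", "dependencies": {}},
}
print(render_map(internal_edges(packages)))
```

Running something like this in CI and committing the output keeps the map in step with the code, so the hand-written files never need to restate it.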

Treat the context window as a scarce resource. Anthropic's skill authoring guidance is explicit: every token in the context window competes with conversation history and the actual request. Root instructions that grow unchecked crowd out the agent's ability to reason about the task at hand. Regular audits (does each line in the root file still apply? does each skill still match the current codebase?) are maintenance, not polish.
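Part of that audit can be automated. The sketch below checks a root file against a line budget and flags backticked paths that no longer exist in the workspace. The 100-line default echoes the Tier 1 guidance earlier in this article; the backtick convention and the function name are assumptions.

```python
import re

# Backticked tokens that contain a slash are treated as workspace paths (an assumption).
PATH_IN_BACKTICKS = re.compile(r"`([^`\s]+/[^`\s]*)`")


def audit_root(text: str, known_paths: set[str], max_lines: int = 100) -> list[str]:
    """Return human-readable findings; an empty list means the file passed."""
    findings = []
    lines = text.splitlines()
    if len(lines) > max_lines:
        findings.append(f"root file has {len(lines)} lines (budget {max_lines})")
    for number, line in enumerate(lines, start=1):
        for path in PATH_IN_BACKTICKS.findall(line):
            if path not in known_paths:
                findings.append(f"line {number}: `{path}` not found in workspace")
    return findings


sample = (
    "Run `scripts/test.sh` before committing.\n"
    "See `docs/agent-guides/build.md` for details.\n"
)
for finding in audit_root(sample, known_paths={"scripts/test.sh"}):
    print(finding)
```

A check like this does not answer whether a rule still applies, but it catches the cheapest form of drift (dead references and unchecked growth) before a human reviewer has to.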

Gate changes to shared context the same way you gate code. The workspace contract (manifests, root instructions, skills, helper scripts) should have named owners, protected review paths, and a change trail. Without that structure, the "map" becomes an unreviewed accumulation of local habits, and every agent session inherits them.

CLAUDE.md sizing: smaller is better

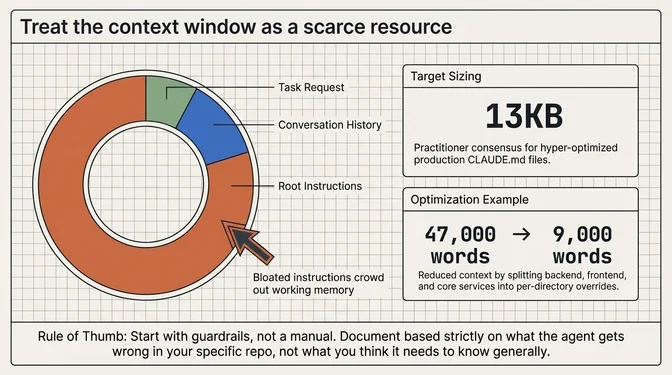

How big should your root documentation be? The practitioners writing about this most seriously all land in the same place: as small as possible.

Shrivu Shankar, a software engineer and AI researcher out of UT Austin who writes extensively about agentic coding workflows, keeps his production monorepo's CLAUDE.md at 13KB and describes allocating token budgets to each tool's documentation like selling ad space: "If you can't explain your tool concisely, it's not ready for the CLAUDE.md."

His key principle: start with guardrails, not a manual. Document based on what the agent gets wrong, not what you think it needs to know.

The official Anthropic best practices reinforce this: "CLAUDE.md loads every session, so only include things that apply broadly. For domain knowledge or workflows that are only relevant sometimes, use skills instead. Bloated CLAUDE.md files cause Claude to ignore your actual instructions."

An Vo, a fullstack developer at NFQ Asia in Ho Chi Minh City, documents reducing a CLAUDE.md from 47,000 words to 9,000 by splitting context across frontend, backend, and core services, loading per-directory overrides only when the agent navigates into those directories.
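To make the per-directory idea concrete, here is a small sketch that works out which instruction files apply to a given working directory, from the root down. The directory names are hypothetical, and real tools may resolve nested files differently; treat this as a model of the concept rather than of any tool's actual loader.

```python
from pathlib import PurePosixPath


def context_chain(working_dir: str, existing: set[str], filename: str = "CLAUDE.md") -> list[str]:
    """List the instruction files that apply in `working_dir`, root first."""
    parts = PurePosixPath(working_dir).parts
    chain = []
    for depth in range(len(parts) + 1):
        # depth 0 is the workspace root; each step descends one directory
        candidate = str(PurePosixPath(*parts[:depth]) / filename)
        if candidate in existing:
            chain.append(candidate)
    return chain


# Hypothetical layout: a short root file plus frontend and backend overrides.
existing = {"CLAUDE.md", "frontend/CLAUDE.md", "backend/CLAUDE.md"}
print(context_chain("frontend/src/components", existing))
print(context_chain("core", existing))
```

An agent working under `frontend/` never pays for the backend file, which is where most of the size reduction in a split like this comes from.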

A community-maintained best practices repository by Shayan Raiss, a software engineer based in Karachi, Pakistan who works at disrupt.com, distills it further: any developer should be able to launch Claude, say "run the tests" and it works on the first try; if it doesn't, your CLAUDE.md is missing essential setup/build/test commands.

The skills ecosystem

Anthropic and OpenAI now both support SKILL.md-style skills, with platform-specific integration details. Overlapping workflows exist across Claude Code, Codex CLI, Cursor, and Gemini CLI.

This has produced a wave of reusable agent configurations. The Antigravity Awesome Skills library aggregates thousands of skills. awesome-claude-code curates the broader ecosystem: skills, hooks, slash commands, orchestrators, and real-world CLAUDE.md examples from production repos like LangGraphJS and Metabase.

For meta-repo setups, skills are particularly useful because they can encode cross-repo workflows. A "deploy-to-k8s" skill can document the full path from code change to production across repos. A "create-new-service" skill can scaffold a repo, configure CI, set up monitoring, and register the service with the workspace manifest, all in one agent-driven workflow.

Git worktrees: parallel agents without collisions

One of the biggest practical trends in agent-driven development has nothing to do with documentation. It's about filesystem isolation. When you run two or three agent sessions against the same repo, they collide: one agent is halfway through modifying src/auth.ts while another rewrites the same file. Git worktrees solve this by giving each agent its own working directory with its own branch, sharing the same repository history.
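For readers who have not used worktrees directly, the underlying command is `git worktree add`. The sketch below builds one invocation per agent task; the `agent/` branch prefix and the `../worktrees` location are illustrative conventions, not something any of the tools mentioned here prescribe.

```python
import shlex


def worktree_add_args(task: str, base: str = "main", root: str = "../worktrees") -> list[str]:
    """Build a `git worktree add` invocation for one agent task.

    Creates a new branch from `base` and checks it out in its own directory,
    so parallel sessions never share a working tree.
    """
    branch = f"agent/{task}"  # hypothetical naming convention
    path = f"{root}/{task}"   # relative to the repo the command runs in
    return ["git", "worktree", "add", "-b", branch, path, base]


for task in ("auth-refactor", "billing-export"):
    print(shlex.join(worktree_add_args(task)))
```

To actually create the worktree, pass the list to `subprocess.run(args, cwd=repo_dir, check=True)` from inside the target repository. Each session then gets its own directory and branch while sharing one object store.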

Claude Code shipped the --worktree CLI flag in v2.1.49, followed by isolation: worktree support in agent definitions in v2.1.50. Cursor built their "Parallel Agents" feature directly on top of worktrees. The pattern has gone from obscure git feature to common infrastructure.

incident.io published a detailed case study about their adoption. They went from zero Claude Code usage to routinely running four or five parallel agents, each working on different features in isolated worktrees. Their key insight is that worktrees let you treat AI coding sessions like long-running processes: ongoing, focused dialogues about specific features, with all the context preserved in the branch and worktree.

A whole ecosystem of worktree tooling has appeared. Upsun's developer center cataloged the ecosystem, which includes tools like agentree for quick worktree creation, ccswarm for coordinating specialized agent pools, and Parallel Code, a desktop app by Johannes Josephs for running Claude Code, Codex CLI, and Gemini CLI sessions side by side, each in its own worktree.

In meta-repo setups, worktrees and the workspace complement each other. You might have an agent working on the backend in one worktree while another agent handles the frontend in a different worktree, both inheriting the same root CLAUDE.md context from the workspace. The meta-repo provides the system map; worktrees provide the isolation.

Architecture decision records as agent context

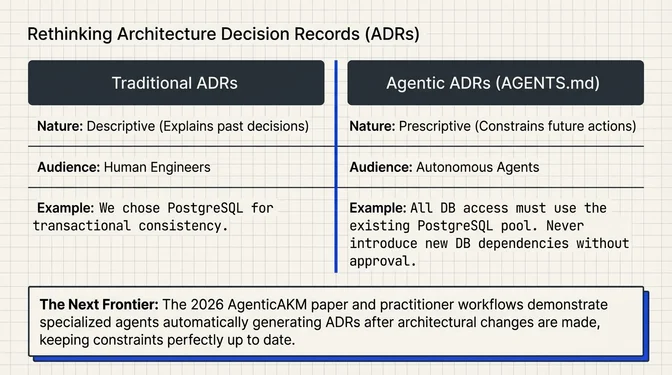

Teams are rethinking architecture documentation. Traditional Architecture Decision Records (ADRs) capture the why behind past decisions for human readers. But as Faisal Feroz, a software engineer writing about agentic architecture, argues, architecture documentation is shifting from describing past decisions for humans to prescribing constraints for agents generating code autonomously.

The distinction is between descriptive documentation ("We chose PostgreSQL because we need transactional consistency") and prescriptive documentation ("All data access must go through the existing PostgreSQL connection pool. Never introduce new database dependencies without explicit approval"). ADRs are descriptive. CLAUDE.md and AGENTS.md are prescriptive. Teams are finding they need both.

A developer named Adolfi took this further by adding a single instruction to his agents.md: "Always create an ADR when changes to the codebase affect the architecture." Now the agent itself generates ADRs after implementing architectural changes, while the context is fresh.

This has academic backing too. A 2026 arXiv paper, AgenticAKM, demonstrated a multi-agent system for automated ADR generation. Specialized agents for code analysis, retrieval, generation, and validation collaborate to produce ADRs from code repositories. Validated across 29 repositories, the system generated better ADRs than a single LLM call alone.

A community-written implementation guide on HuggingFace, building on Anthropic's 2026 Agentic Coding Trends Report, prescribes a specific repo structure that includes ADRs as a first-class component: docs/adr/ with stable IDs, golden path templates, standardized build entrypoints, and a machine-readable CODEOWNERS map. The ADRs serve dual duty: history for humans, constraints for agents.
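One way to get stable IDs in a `docs/adr/` directory is a zero-padded numeric prefix that only ever increases. The sketch below assumes that naming scheme and a common Status/Context/Decision section layout; neither is taken from the guide itself.

```python
import re


def next_adr_path(existing: list[str], title: str) -> str:
    """Pick the next ADR filename, assuming an NNNN-slug.md convention."""
    numbers = [int(m.group(1)) for name in existing if (m := re.match(r"(\d{4})-", name))]
    next_id = max(numbers, default=0) + 1  # gaps are never refilled, so IDs stay stable
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    return f"docs/adr/{next_id:04d}-{slug}.md"


def render_adr(title: str, context: str, decision: str) -> str:
    """Render a short ADR: the context is the 'why', the decision is the 'must'."""
    return (
        f"# {title}\n\n"
        "## Status\nAccepted\n\n"
        f"## Context\n{context}\n\n"
        f"## Decision\n{decision}\n"
    )


existing = ["0001-record-architecture-decisions.md", "0002-use-postgresql.md", "README.md"]
print(next_adr_path(existing, "Route data access through the connection pool"))
print(render_adr(
    "Route data access through the connection pool",
    "We chose PostgreSQL because we need transactional consistency.",
    "All data access must go through the existing PostgreSQL connection pool.",
))
```

The Context section carries the descriptive half for human readers, while the Decision section is phrased as a constraint an agent can follow, which is the dual duty described above.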

Active work as extended memory

One pattern that distinguishes the more mature meta-repo implementations is using the workspace as session-spanning working memory. Most discussions of CLAUDE.md focus on static reference: conventions, commands, architecture. But Bernd Kampl's write-up from Anyline (a mobile OCR SDK company managing six repos across Android, iOS, Flutter, React Native, Cordova, and .NET) includes an entire section on what he calls "Active Work as Extended Memory."

The idea: when an agent is working on a multi-session task (say, updating a shared schema across six repos), it writes progress notes, decisions made, discoveries, and remaining work into markdown files inside the meta-repo. The next session reads those files and picks up where the last one left off. It's not auto-memory (the small persistent scratchpad that agents maintain natively). It's structured, version-controlled, human-reviewable documentation of in-flight work.

Kampl is explicit about why this matters: "Yes, this helps the agent remember, but the real benefit is for the humans: an auditable trail you can review, correct, and learn from."

Some teams formalize this with a tickets/ directory inside the workspace that tracks implementation work alongside learnings and gotchas. When a task is complete, the ticket becomes a knowledge base entry, reusable context for any future session.
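A rough sketch of what such a note might look like in practice: one function writes the end-of-session note, another lets the next session recover the remaining work. The section headings and function names are assumptions chosen for the example, not Kampl's format.

```python
def render_note(task: str, decisions: list[str], done: list[str], remaining: list[str]) -> str:
    """Render an end-of-session progress note as reviewable markdown."""
    lines = [f"# {task}", ""]
    for heading, items in (("Decisions", decisions), ("Done", done), ("Remaining", remaining)):
        lines.append(f"## {heading}")
        lines += [f"- {item}" for item in items]
        lines.append("")
    return "\n".join(lines)


def remaining_work(note: str) -> list[str]:
    """Recover the 'Remaining' items so the next session can pick up from them."""
    items, in_section = [], False
    for line in note.splitlines():
        if line.startswith("## "):
            in_section = line == "## Remaining"
        elif in_section and line.startswith("- "):
            items.append(line[2:])
    return items


note = render_note(
    "Update shared schema",
    decisions=["Keep v1 field names for one release"],
    done=["android"],
    remaining=["ios", "flutter"],
)
print(note)
print(remaining_work(note))
```

Because the note is plain markdown committed to the meta-repo, a reviewer can correct a wrong decision in a pull request before the next session builds on it.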

Agents amplify discipline, for better or worse

There's a framing that cuts across these approaches, articulated in TechTarget's hands-on review of AI agents:

Organizations with strong foundations in software engineering practices, GitOps, CI/CD, test automation, platform engineering and architectural oversight can channel agent-driven velocity into predictable productivity gains. Organizations without these foundations will simply generate chaos quicker, as AI agents are indifferent to whether they are scaling good practices or bad ones.

A CLAUDE.md that says "always run tests before committing" will produce tested code at agent speed. A workspace with no such constraint will produce untested code at agent speed. The earlier sections cover the governance mechanics for keeping context healthy, but the deeper point is about judgment. As Kampl puts it in his Anyline write-up: "The agent doesn't replace judgment — it gives you super powers. You still need someone who knows the codebase, understands the architecture, and can look at a plan and say 'no, that merge order will break the Flutter build.'"

Building a meta-repo: a starter checklist

Six elements that show up repeatedly in mature implementations:

- Repo manifest: a machine-readable file (JSON, YAML) listing every repo with its URL, default branch, and tags. Drives the clone script and scopes cross-repo operations.

- Root instructions: a concise CLAUDE.md or AGENTS.md (under 100 lines) documenting universal conventions, infrastructure topology, and pointers to deeper docs. Loaded every session.

- Generated dependency or system map: derived from source code, project graphs, or API schemas rather than maintained by hand. Keeps topology current without manual upkeep.

- Permission boundaries: deny-rules for sensitive files, trusted-versus-untrusted context separation, hook restrictions, and MCP allowlists. The meta-repo is a policy surface, not just a navigation layer.

- Named ownership: CODEOWNERS on the workspace contract, protected branches, and required reviews before changes to shared rules or manifests merge.

- Approval gates for risky actions: plan-first modes for cross-repo changes, human review for deployment-affecting operations, and standardized PR templates so agent-authored changes get the same scrutiny as human-authored ones.

None of these require exotic tooling. A git repo, a markdown file, a JSON manifest, and a CODEOWNERS file will get you most of the way there.

What's coming next

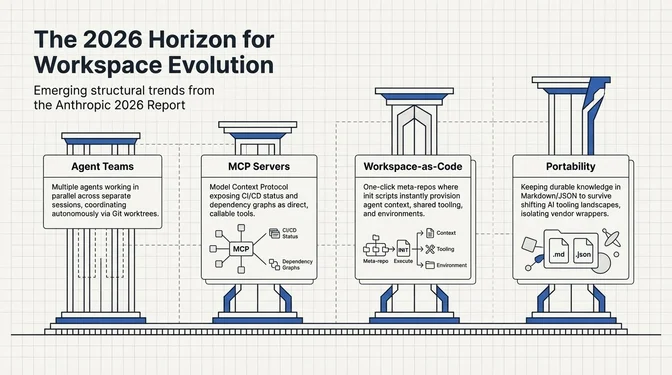

Anthropic's 2026 Agentic Coding Trends Report identifies eight trends defining how software gets built this year. Four areas demand immediate attention: mastering multi-agent coordination, scaling human-agent oversight through AI-automated review, extending agentic coding beyond engineering teams, and embedding security architecture from the earliest stages. For meta-repo practitioners, the developments below translate most directly into workspace evolution:

Agent teams and parallel execution

Claude Code now supports agent teams: agents that work in parallel across separate sessions and coordinate via git. Combined with worktree isolation and a meta-repo that defines the workspace, you could have one agent working on the backend while another handles the frontend, both sharing the same system context, each in its own worktree.

MCP servers as workspace infrastructure

Model Context Protocol servers can expose workspace operations (repo status, dependency graphs, CI results) as tools the agent calls directly. Nx already ships an MCP server that connects agents to CI. Expect more tools to follow this pattern, especially for meta-repo operations like cross-repo status checks and coordinated branching.

Workspace-as-code

The meta-repo itself becomes a versioned, shareable artifact. New team members clone it, run an init script, and have a fully configured workspace with agent context, all repos, and shared tooling. This is already standard practice for the teams described above.

ADRs as living agent constraints

As the line between "documentation for humans" and "instructions for agents" blurs, expect more teams to maintain ADRs that serve both audiences, capturing the why for future engineers while encoding the must for current agents.

Portability across agent runtimes

The tool surface is still moving quickly. Anthropic uses CLAUDE.md, scoped settings, managed hooks, and MCP restrictions; OpenAI Codex exposes AGENTS.md and its own approval modes; Nx generates configurations for six different tools from a single source of truth. Teams should assume that today's agent runtime may not be the one they use a year from now. The safest long-term design keeps durable organizational knowledge in portable formats (markdown, JSON manifests, generated metadata) while isolating tool-specific wrappers in a thin, swappable layer.
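One possible shape for that thin layer is a generator that renders each tool's instruction file from a single portable source. This is a sketch under assumptions: the source path and header text are hypothetical, and a real setup would likely add tool-specific sections rather than emit identical files.

```python
GENERATED_HEADER = "<!-- Generated from {source}; edit the source, not this file. -->"

# File names each runtime reads; the shared body is identical in this simplified sketch.
TARGETS = ("CLAUDE.md", "AGENTS.md")


def render_wrappers(shared: str, source: str = "docs/agent-context/shared.md") -> dict[str, str]:
    """Return the content of each tool-specific file, keyed by filename."""
    header = GENERATED_HEADER.format(source=source)
    return {target: f"{header}\n\n{shared.rstrip()}\n" for target in TARGETS}


wrappers = render_wrappers("Always run tests before committing.\n")
for filename, content in wrappers.items():
    print(f"--- {filename} ---")
    print(content)
```

Swapping or adding a runtime then means changing the `TARGETS` tuple, not rewriting the organizational knowledge itself.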